
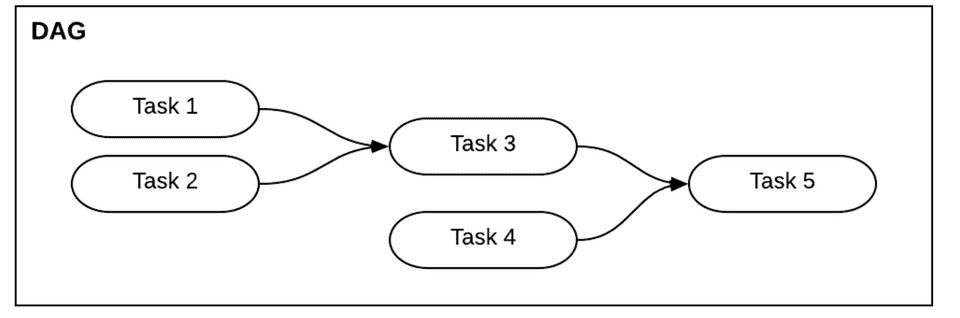

12/3/2023

Airflow DAG dependency

In Airflow, a DAG is a Python script containing a set of tasks and their dependencies. What each task does is determined by the task’s operator. For example, defining a task with a PythonOperator means that the task consists of running Python code.

To create our first DAG, let’s start by importing the necessary modules:
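The original post does not show the imports themselves, so what follows is only a minimal sketch of what they might look like. It assumes Airflow 2.4 or later (for the `schedule` argument); the DAG ID, task IDs, and the two placeholder functions are hypothetical names chosen for illustration.

```python
from datetime import datetime

from airflow import DAG
from airflow.operators.python import PythonOperator


def extract():
    # Placeholder task logic (hypothetical).
    print("extracting")


def transform():
    # Placeholder task logic (hypothetical).
    print("transforming")


with DAG(
    dag_id="first_dag",               # hypothetical DAG name
    start_date=datetime(2023, 1, 1),
    schedule=None,                    # no schedule: run only when triggered
    catchup=False,
) as dag:
    extract_task = PythonOperator(task_id="extract", python_callable=extract)
    transform_task = PythonOperator(task_id="transform", python_callable=transform)

    # Dependency: transform runs only after extract has succeeded.
    extract_task >> transform_task
```

The `>>` operator is what declares the dependency between the two tasks; the operator class (here `PythonOperator`) decides what each task actually does.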

Don’t store data on your local file system: When working with data in Airflow, you may be tempted to write data to the local file system. However, Airflow runs multiple tasks in parallel, so downstream tasks may not be able to access that data. The easiest way around this problem is to use shared storage that all Airflow workers can access.

Processing large volumes of data can overburden the Airflow cluster. Managing resources properly helps reduce this burden.

Managing concurrency using pools: When running many processes in parallel, numerous tasks may require access to the same resource. Airflow uses resource pools to regulate how many tasks can access a resource. Each pool has a set number of slots that grant access to the associated resource.

Detect long-running tasks with SLAs and alerts: Airflow’s SLA (Service Level Agreement) mechanism allows users to track task performance. It lets a user assign an SLA timeout to a DAG, and if even one of the DAG’s tasks takes longer than the specified SLA timeout, Airflow notifies the user.
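The pool and SLA settings described above could be attached to a task roughly as follows. This is a sketch assuming Airflow 2.4 or later, where `pool` and `sla` are operator arguments; the pool name `db_pool`, the DAG ID, and the callable are hypothetical, and the pool is assumed to have been created beforehand (for example under Admin → Pools in the web UI).

```python
from datetime import datetime, timedelta

from airflow import DAG
from airflow.operators.python import PythonOperator


def load_to_db():
    # Placeholder for work that needs the shared resource (hypothetical).
    print("loading")


with DAG(
    dag_id="pool_and_sla_example",    # hypothetical DAG name
    start_date=datetime(2023, 1, 1),
    schedule="@daily",                # SLAs are evaluated for scheduled runs
    catchup=False,
) as dag:
    load_task = PythonOperator(
        task_id="load_to_db",
        python_callable=load_to_db,
        # The task occupies one slot of this pool while it runs.
        # Assumption: a pool named "db_pool" already exists.
        pool="db_pool",
        # Airflow records an SLA miss if the task has not succeeded
        # within this window for a scheduled run.
        sla=timedelta(minutes=30),
    )
```

Airflow 2.x also accepts an `sla_miss_callback` argument on the DAG for custom notifications. SLA handling differs between Airflow versions, so check the behavior against the release you run.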

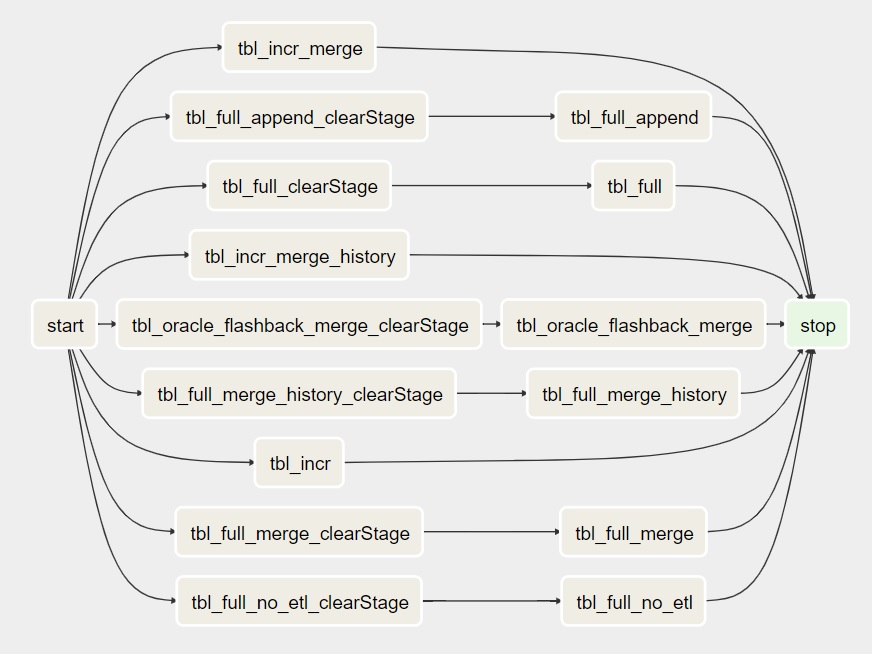

Airflow DAGs that process large amounts of data should be carefully designed to be as efficient as possible.

Limit the data processed: Limiting processing to the minimum amount of data necessary to achieve the intended result is the most effective approach to data management. This includes thoroughly examining the data sources and assessing whether they are all required.

Incremental processing: The main idea behind incremental processing is to split the data into (time-based) ranges and process each range in its own DAG run. Users can take advantage of incremental processing by running filter/aggregate steps in the incremental stage and performing the large-scale analysis on the reduced output.

Design tasks using a functional paradigm: It’s easier to design tasks using a functional programming paradigm. Functional programming is a way of writing computer programs that treats computation primarily as the application of mathematical functions and avoids modifying data or changing state.

Task results should be deterministic: To create reproducible tasks and DAGs, task results should be deterministic. A deterministic task always returns the same output for a given input. Likewise, no matter how often you run an idempotent task, the result is always the same.
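As a rough illustration of incremental, deterministic, and idempotent processing, the sketch below uses plain Python rather than Airflow. The function names, the sample events, and the dictionary standing in for an output store are all hypothetical. In a real DAG, the day being processed would typically come from the run's logical date rather than from a loop.

```python
from datetime import date, timedelta


def daily_total(events, day):
    """Pure function: sum the amounts of the events that fall on `day`.

    The result depends only on the arguments, so it is deterministic.
    """
    return sum(amount for event_day, amount in events if event_day == day)


def process_partition(events, day, store):
    """Process one day's range and overwrite that day's entry in `store`.

    Overwriting (rather than appending) keeps the step idempotent:
    rerunning it for the same day leaves `store` unchanged.
    """
    store[day.isoformat()] = daily_total(events, day)
    return store


# Hypothetical sample data: (event date, amount) pairs.
events = [
    (date(2023, 12, 1), 10),
    (date(2023, 12, 1), 5),
    (date(2023, 12, 2), 7),
]

store = {}
day = date(2023, 12, 1)
while day <= date(2023, 12, 2):
    process_partition(events, day, store)  # one "run" per time range
    day += timedelta(days=1)

# Rerunning an already processed range does not change the result.
process_partition(events, date(2023, 12, 1), store)
print(store)  # {'2023-12-01': 15, '2023-12-02': 7}
```

Each range is handled independently, so a failed day can be rerun on its own without touching the others.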

Aside from developing good DAG code, one of the most demanding parts of creating a successful DAG is making tasks reproducible. This means the user can rerun a task and get the same results, even if the task runs at a different time.

Tasks must always be idempotent: Idempotence is one of the essential properties of a good Airflow task. Idempotence ensures consistency and resilience in the face of failures.

It’s easy to get confused when creating an Airflow DAG. For example, DAG code can quickly become unnecessarily complex or difficult to understand, especially when team members write their DAGs in vastly different programming styles.

Use style conventions: Adopting a clean programming style and applying it consistently to all Apache Airflow DAGs is one of the first steps to creating clean and consistent DAGs. When writing code, the easiest way to make it more transparent and understandable is to follow a commonly used style.

Centralized credential management: As an Airflow DAG interacts with various systems, such as databases and cloud storage, it has to handle many different credentials. Fortunately, retrieving connection data from the Airflow connection store makes it easy to manage credentials for custom code.

Group tasks together using task groups: Complex Airflow DAGs can be challenging to understand due to the number of tasks required. The task group feature introduced in Airflow 2 helps manage these complex systems. Task groups divide tasks into smaller groups to make the DAG structure more manageable and easier to understand.
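To make the credential and task group points more concrete, here is a sketch assuming Airflow 2.4 or later. The connection ID `orders_db`, the DAG ID, the group ID, and the three placeholder functions are hypothetical; the connection is assumed to have been defined in the Airflow connection store beforehand.

```python
from datetime import datetime

from airflow import DAG
from airflow.hooks.base import BaseHook
from airflow.operators.python import PythonOperator
from airflow.utils.task_group import TaskGroup


def fetch_orders():
    # Read credentials from the connection store at run time
    # instead of hard-coding them in the DAG file.
    conn = BaseHook.get_connection("orders_db")  # hypothetical connection ID
    print(f"connecting to {conn.host} as {conn.login}")


def clean_orders():
    print("cleaning")  # placeholder logic (hypothetical)


def publish_report():
    print("publishing")  # placeholder logic (hypothetical)


with DAG(
    dag_id="task_group_example",      # hypothetical DAG name
    start_date=datetime(2023, 1, 1),
    schedule=None,
    catchup=False,
) as dag:
    # Related tasks are collected in one group, which the UI can collapse.
    with TaskGroup(group_id="prepare_orders") as prepare_orders:
        fetch = PythonOperator(task_id="fetch_orders", python_callable=fetch_orders)
        clean = PythonOperator(task_id="clean_orders", python_callable=clean_orders)
        fetch >> clean

    publish = PythonOperator(task_id="publish_report", python_callable=publish_report)

    # A whole group can take part in a dependency like a single task.
    prepare_orders >> publish
```

Looking up the connection inside the callable, rather than at module level, avoids querying the connection store every time the DAG file is parsed.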
